The df command reports disk space usage for the filesystems on a Linux system, not memory (RAM) usage. Understanding its output is useful for Linux developers. The default output has six columns: Filesystem, 1K-blocks, Used, Available, Use%, and Mounted on.

Here is the result of the df command

Filesystem

The Filesystem column shows the device (or other source, such as tmpfs) that holds the filesystem. Here are the details on the Linux device.

1K-blocks

It shows the total size of the filesystem in 1K (1024-byte) blocks.

Used

It shows the space already used on the filesystem, in 1K blocks (not bytes).

Available

It shows the space still available to ordinary (non-root) users, in 1K blocks (not bytes).
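To see where these three numbers come from, here is a minimal Python sketch that reads the same filesystem statistics through os.statvfs. The function name disk_usage_1k is made up for this example, and the values may differ from df by a block or so because df rounds each value on its own.

```python
import os


def disk_usage_1k(path="/"):
    """Return (total, used, available) in 1K blocks for the filesystem holding path.

    Hypothetical helper for illustration; it is not part of df.
    """
    st = os.statvfs(path)
    # f_frsize is the fundamental block size that the block counts are expressed in.
    total = st.f_blocks * st.f_frsize // 1024
    # f_bfree counts all free blocks, including those reserved for root.
    free = st.f_bfree * st.f_frsize // 1024
    # f_bavail counts only the free blocks an unprivileged user may use.
    available = st.f_bavail * st.f_frsize // 1024
    used = total - free
    return total, used, available


total, used, available = disk_usage_1k("/")
print(f"1K-blocks={total} Used={used} Available={available}")
```

Because some blocks can be reserved for root, Used plus Available is often a little smaller than 1K-blocks.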

Use%

It shows the percentage of the filesystem's space that is in use.
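The percentage can be reproduced from the Used and Available columns. The sketch below assumes the behavior of GNU df as I understand it: the percentage is Used divided by (Used + Available), rounded up to a whole number. The function name use_percent is made up for this example.

```python
def use_percent(used, available):
    """Return Use% as an integer, rounded up, or None when there is nothing to divide by.

    Assumption: mirrors GNU df, which divides by used + available
    (space reserved for root is left out of the denominator).
    """
    denom = used + available
    if denom == 0:
        return None  # df prints "-" for filesystems that report no blocks
    # Ceiling division without floating point.
    return -(-used * 100 // denom)


print(use_percent(1, 2))    # 34 (33.33... rounded up)
print(use_percent(50, 50))  # 50
```

Rounding up means a filesystem that is only slightly used still shows 1% rather than 0%.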

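Mounted on

It shows the mount point: the directory in the directory tree where the filesystem is attached. The root filesystem is mounted on /, and other filesystems are mounted on directories beneath it, such as /boot or /home.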

Related posts

-

How to Create a Generic Stored Procedure for KPI Calculation (SQL + AWS Lambda)

In modern data engineering, building scalable and reusable systems is essential. Writing separate SQL queries for every KPI quickly becomes messy and hard to maintain. A better approach?👉 Use a Generic Stored Procedure powered by Dynamic SQL, and trigger it using AWS Lambda. In this blog, you’ll learn: What is a Generic Stored Procedure? A…

-

Unlocking the Power of Databricks Genie: A Comprehensive Guide

Databricks Genie is a collaborative data engineering tool built on the Databricks Unified Analytics Platform, enhancing data analytics for businesses. Key features include collaborative workspaces, efficient data processing with Apache Spark, built-in machine learning capabilities, robust data visualization, seamless integration, and strong security measures, fostering informed decision-making.

-

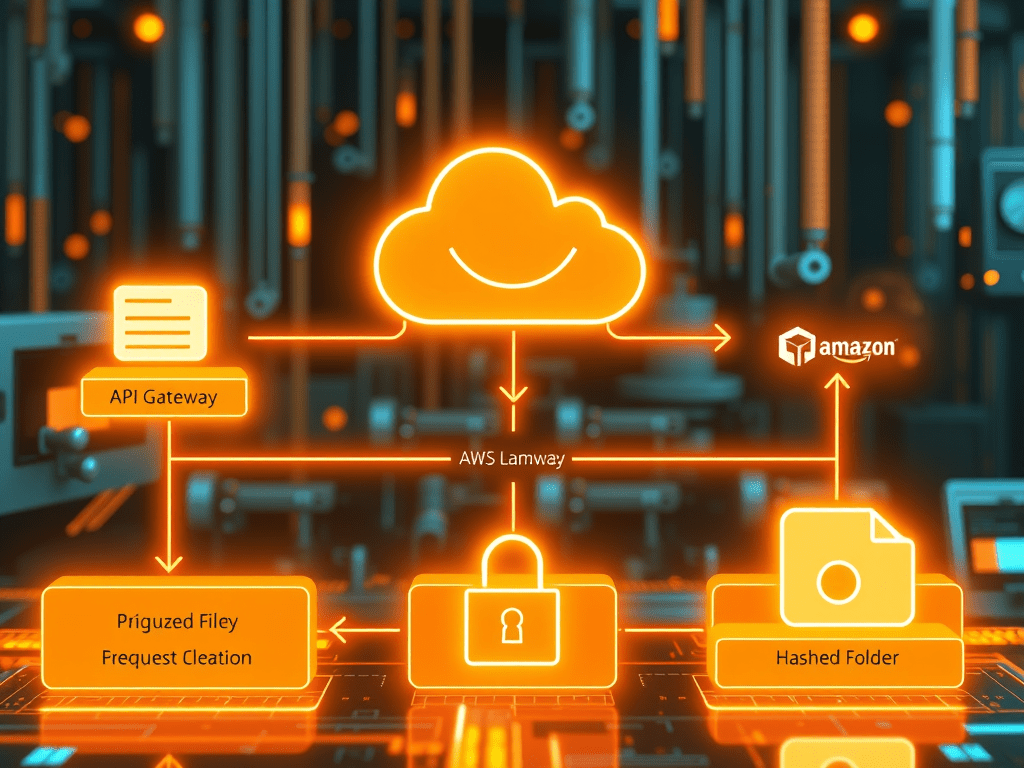

Secure S3 File Upload Using API Gateway, Lambda & PostgreSQL (Complete AWS Architecture Guide

Modern applications often allow users to upload files—documents, invoices, images, or datasets. But a production-grade upload pipeline must be secure, scalable, and well-organized. In this article, we will build a complete end-to-end architecture where: We will implement this using Amazon API Gateway, AWS Lambda, PostgreSQL, and Amazon S3. This architecture is widely used in cloud-native…

You must be logged in to post a comment.