This post covers set comprehensions in Python and how to use them. A comprehension lets you build a collection in a single, compact expression, which often simplifies your code.

Here’s what to know about set comprehensions

Before you dive into set comprehensions, keep these points in mind:

- You can’t modify an existing set with a comprehension; you can only create a new one.

- The comprehension must result in a valid set, so every element it produces must be hashable.

- A set cannot contain multiple entries of the same value (duplicates are not allowed).
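The first two points can be checked with a minimal sketch. The names `original`, `doubled`, and `error_message` are hypothetical and chosen only for illustration:

```python
# Hypothetical example data; the names are for illustration only.
original = {1, 2, 3}

# A comprehension builds a brand-new set; `original` is left untouched.
doubled = {x * 2 for x in original}

print(original == {1, 2, 3})  # True: the source set is unchanged
print(doubled is original)    # False: a separate set object was created

# Every element must be hashable, otherwise no valid set can be built.
error_message = ""
try:
    bad = {[x, x] for x in original}  # lists are unhashable
except TypeError as err:
    error_message = str(err)
    print("TypeError:", error_message)
```

The exact wording of the `TypeError` message can differ between Python versions, so the sketch prints it rather than assuming it.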

1. What the data looks like in a set

As with duplicate keys in a dictionary, Python is polite about this. If you try to add a value that is already in the set, no error is raised. The set simply stays unchanged, and the element that was already there is kept.
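A short sketch of this behavior, using made-up values (`colors` and `numbers` are hypothetical names):

```python
colors = {"red", "green"}
size_before = len(colors)

# Adding a value that is already present raises no error and changes nothing.
colors.add("red")
print(len(colors) == size_before)  # True

# 1 and True compare equal and hash the same, so the set treats them as
# one value. The element already in the set is the one that stays.
numbers = {1}
numbers.add(True)
print(numbers)  # {1}
```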

Syntax for Set comprehension

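{expression(variable) for variable in iterable [if predicate]}

Here, iterable can be any iterable (a list, tuple, string, or another set), and the optional if predicate filters which values are included. Additional for and if clauses can follow the first one.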

With set comprehension, you can eliminate duplicates. In fact, this is one of the most basic uses of set comprehension.
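To make the syntax concrete, here is a small sketch with an expression and an optional predicate. The word list and variable names are assumptions made up for this example:

```python
# Hypothetical input with mixed case and repeated words.
words = ["Apple", "banana", "APPLE", "Cherry", "kiwi", "Banana"]

# Expression only: lowercase every word; duplicates collapse automatically.
lowercase_words = {w.lower() for w in words}

# Expression plus predicate: keep only words longer than four characters.
long_words = {w.lower() for w in words if len(w) > 4}

# Sets are unordered, so sort them to get a stable display order.
print(sorted(lowercase_words))  # ['apple', 'banana', 'cherry', 'kiwi']
print(sorted(long_words))       # ['apple', 'banana', 'cherry']
```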

2. How to work with Set comprehension

Given a list, we can copy it into a new list with a simple list comprehension like this:

l_copy = [x for x in original_list]

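If we swap the square brackets for curly braces, the list comprehension becomes a set comprehension. The result holds the same values, but as a set: duplicates are dropped and the original order is not preserved.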

my_list_dupes = [5,5,7,8,9,3,4,1,2,3,4,5,6,7,1,2,3]

my_set_wo_dupes = {x for x in my_list_dupes}

print(my_set_wo_dupes)

Output:

{1, 2, 3, 4, 5, 6, 7, 8, 9}
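As a quick check on the example above, the sketch below compares sizes before and after, and compares the comprehension with the built-in `set()` constructor. The `remainders` variable is a hypothetical addition that shows where a comprehension does more than `set()` alone:

```python
my_list_dupes = [5, 5, 7, 8, 9, 3, 4, 1, 2, 3, 4, 5, 6, 7, 1, 2, 3]

my_set_wo_dupes = {x for x in my_list_dupes}

print(len(my_list_dupes))    # 17
print(len(my_set_wo_dupes))  # 9

# With no transformation or filter, set() gives the same result.
print(my_set_wo_dupes == set(my_list_dupes))  # True

# A comprehension is more useful when each value is transformed first.
remainders = {x % 3 for x in my_list_dupes}
print(sorted(remainders))  # [0, 1, 2]
```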


References

More Srinimf

-

How to Create a Generic Stored Procedure for KPI Calculation (SQL + AWS Lambda)

In modern data engineering, building scalable and reusable systems is essential. Writing separate SQL queries for every KPI quickly becomes messy and hard to maintain. A better approach?👉 Use a Generic Stored Procedure powered by Dynamic SQL, and trigger it using AWS Lambda. In this blog, you’ll learn: What is a Generic Stored Procedure? A…

-

Unlocking the Power of Databricks Genie: A Comprehensive Guide

Databricks Genie is a collaborative data engineering tool built on the Databricks Unified Analytics Platform, enhancing data analytics for businesses. Key features include collaborative workspaces, efficient data processing with Apache Spark, built-in machine learning capabilities, robust data visualization, seamless integration, and strong security measures, fostering informed decision-making.

-

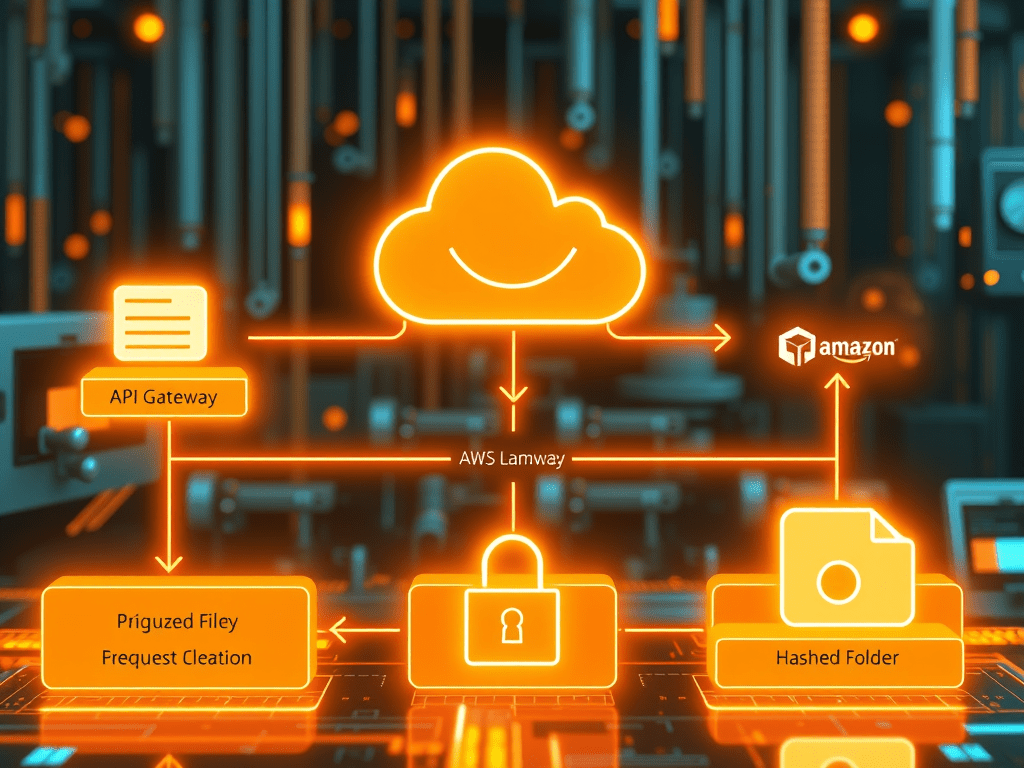

Secure S3 File Upload Using API Gateway, Lambda & PostgreSQL (Complete AWS Architecture Guide

Modern applications often allow users to upload files—documents, invoices, images, or datasets. But a production-grade upload pipeline must be secure, scalable, and well-organized. In this article, we will build a complete end-to-end architecture where: We will implement this using Amazon API Gateway, AWS Lambda, PostgreSQL, and Amazon S3. This architecture is widely used in cloud-native…