Modern applications often allow users to upload files—documents, invoices, images, or datasets. But a production-grade upload pipeline must be secure, scalable, and well-organized.

In this article, we will build a complete end-to-end architecture where:

- A frontend user submits data

- An API receives the request

- The system validates the request against a database

- A hashed folder key is generated

- The file is securely uploaded to an object storage system

We will implement this using Amazon API Gateway, AWS Lambda, PostgreSQL, and Amazon S3.

This architecture is widely used in cloud-native applications and data platforms.

The Problem We Want to Solve

Imagine a web application where users upload files associated with:

- Customer

- Site

If files are stored directly in a bucket without structure, the storage becomes messy.

Example (bad design):

    s3://uploads-bucket/
        invoice1.csv
        invoice2.csv
        contract.pdf

Instead, we want a unique folder per customer + site combination.

To achieve this, we generate a hashed folder key using:

MD5(customer_id + "-" + site_id)

Example:

    customer_id = 101
    site_id = 22
    hash = 7e4c8b6a9adfbc13

(The hash is shortened here for readability; a real MD5 hex digest is 32 characters long.)

Files will be stored as:

s3://uploads-bucket/7e4c8b6a9adfbc13/invoice.csv
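As a minimal sketch of the hashing rule above, the folder key can be computed with Python's standard `hashlib`. The function name `folder_key` is a hypothetical helper, not something the pipeline requires. The "-" separator matters: without it, the pairs (1, 12) and (11, 2) would produce the same input string.

```python
import hashlib


def folder_key(customer_id: int, site_id: int) -> str:
    """Return the MD5 hex digest of "<customer_id>-<site_id>"."""
    raw = f"{customer_id}-{site_id}".encode("utf-8")
    return hashlib.md5(raw).hexdigest()


if __name__ == "__main__":
    key = folder_key(101, 22)
    # The digest is always a 32-character hex string.
    print(key, len(key))
```

Calling the function twice with the same IDs returns the same string, which is what makes the folder name deterministic.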

This approach ensures:

- clean structure

- deterministic folder naming

- no exposure of business IDs

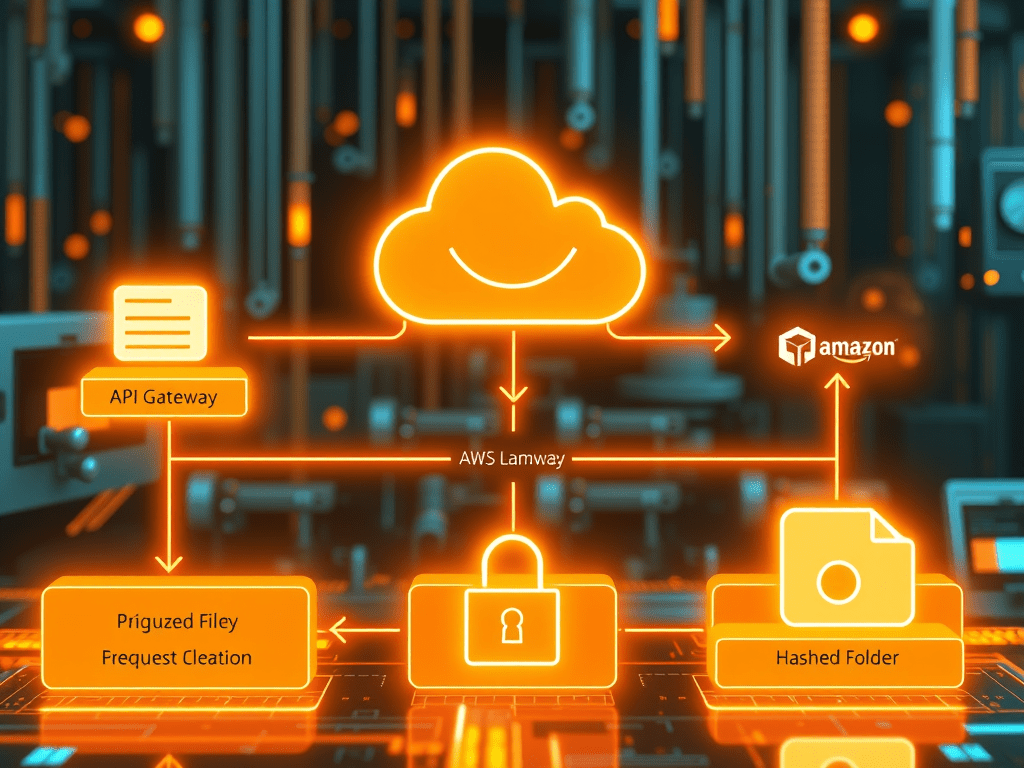

High-Level Architecture

The full system architecture looks like this:

    Frontend Application
        │
        │ HTTP Request
        ▼
    API Gateway
        │
        ▼
    Lambda Function
        │
        │ Validate customer + site
        ▼
    PostgreSQL Database
        │
        │ Generate MD5 folder
        ▼
    Lambda generates S3 Presigned URL
        │
        ▼
    Frontend uploads file
        │
        ▼
    Amazon S3 bucket

Key benefits of this design:

- the database is never exposed to the frontend

- scalable serverless backend

- direct upload to S3 without routing files through backend servers

Step 1: User Enters Data in Frontend

A user uploads a file from the web application. The frontend collects:

- customer_id

- site_id

- file_name

Example request payload:

    {
      "customer_id": 101,
      "site_id": 22,
      "file_name": "invoice.csv"
    }

The frontend sends this request to an API endpoint:

POST /generate-upload-url
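To make the request concrete, here is a rough sketch of a client building that call, written in Python as a stand-in for the browser code. The base URL `https://api.example.com` and the helper name `build_upload_url_request` are assumptions for illustration only.

```python
import json
import urllib.request


def build_upload_url_request(api_base: str, customer_id: int, site_id: int,
                             file_name: str) -> urllib.request.Request:
    """Build (but do not send) the POST /generate-upload-url request."""
    payload = {
        "customer_id": customer_id,
        "site_id": site_id,
        "file_name": file_name,
    }
    return urllib.request.Request(
        url=f"{api_base.rstrip('/')}/generate-upload-url",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # api.example.com is a placeholder, not a real endpoint.
    req = build_upload_url_request("https://api.example.com", 101, 22, "invoice.csv")
    print(req.get_method(), req.full_url)
```

Passing the returned object to `urllib.request.urlopen` would send it; the sketch stops short of that so it can be read and run without a deployed API.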

Step 2: API Gateway Receives Request

The request first reaches Amazon API Gateway.

API Gateway acts as the entry point to your backend services.

It can be configured to handle:

- authentication

- request validation

- routing to backend compute

In this case, the route is configured as:

POST /generate-upload-url

This route triggers a backend Lambda function.
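With a Lambda proxy integration, the function receives the HTTP request as an event dictionary whose `body` field is a string, optionally base64-encoded. The following sketch shows one way to unpack it; `parse_event_body` is a hypothetical helper, and the required field names are taken from the request payload in Step 1.

```python
import base64
import json

REQUIRED_FIELDS = ("customer_id", "site_id", "file_name")


def parse_event_body(event: dict) -> dict:
    """Extract and check the JSON payload from an API Gateway proxy event."""
    raw = event.get("body") or ""
    if event.get("isBase64Encoded"):
        raw = base64.b64decode(raw).decode("utf-8")
    # json.JSONDecodeError is a subclass of ValueError, so callers
    # can catch ValueError for both malformed and incomplete bodies.
    body = json.loads(raw)
    if not isinstance(body, dict):
        raise ValueError("request body must be a JSON object")
    missing = [name for name in REQUIRED_FIELDS if name not in body]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    return body
```

A handler could catch `ValueError` from this helper and answer with a 400 response instead of letting the invocation fail.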

Step 3: Lambda Processes the Request

The request is then handled by AWS Lambda.

Lambda is responsible for:

- validating customer and site

- generating the hashed folder

- creating a presigned upload URL

Example Lambda logic:

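    import json
    import hashlib
    import boto3
    import psycopg2  # used for the PostgreSQL validation in Step 4

    # Create the client once so warm invocations can reuse it
    s3 = boto3.client("s3")

    def lambda_handler(event, context):
        # With Lambda proxy integration, the body arrives as a JSON string
        body = json.loads(event["body"])
        customer_id = body["customer_id"]
        site_id = body["site_id"]
        file_name = body["file_name"]

        # Deterministic folder name derived from customer and site
        folder_key = hashlib.md5(
            f"{customer_id}-{site_id}".encode()
        ).hexdigest()

        # Full object key: <hashed folder>/<file name>
        s3_key = f"{folder_key}/{file_name}"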

This generates a unique hashed folder.
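The snippet above places `file_name` into the object key exactly as the client sent it. One possible hardening, sketched here under the assumption that only a flat file name is wanted inside each folder, is to keep just the final path component. The helper name `build_s3_key` is hypothetical.

```python
import hashlib
import posixpath


def build_s3_key(customer_id: int, site_id: int, file_name: str) -> str:
    """Return "<md5 folder>/<base file name>" for the upload."""
    folder = hashlib.md5(f"{customer_id}-{site_id}".encode("utf-8")).hexdigest()
    # Keep only the last path component so a name containing slashes
    # cannot create nested or misleading prefixes inside the bucket.
    safe_name = posixpath.basename(file_name.replace("\\", "/")).strip()
    if safe_name in ("", ".", ".."):
        raise ValueError("file_name must contain a usable base name")
    return f"{folder}/{safe_name}"
```

With this version, `invoice.csv` and `../../other/invoice.csv` map to the same key, and a name such as `uploads/` is rejected.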

Step 4: Validate Customer and Site in PostgreSQL

Before generating the upload link, we must ensure the customer and site exist.

Lambda queries PostgreSQL.

Example SQL query:

    SELECT c.customer_id, s.site_id
    FROM customer c
    JOIN site s
    ON c.customer_id = s.customer_id
    WHERE c.customer_id = %s
    AND s.site_id = %s;

If the record does not exist, the API returns an error.

This step ensures:

- valid customer

- valid site

- the site actually belongs to that customer, so uploads for unknown pairs are rejected
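The check could be wrapped in a small function like the sketch below. It assumes `conn` is an open DB-API connection such as the one returned by `psycopg2.connect(...)`, and it reuses the table and column names from the query above. `customer_site_exists` is a hypothetical name. The IDs are passed as parameters rather than formatted into the SQL string, so the driver handles quoting.

```python
VALIDATION_SQL = """
    SELECT c.customer_id, s.site_id
    FROM customer c
    JOIN site s ON c.customer_id = s.customer_id
    WHERE c.customer_id = %s
      AND s.site_id = %s;
"""


def customer_site_exists(conn, customer_id: int, site_id: int) -> bool:
    """Return True when the site exists and belongs to the customer."""
    cur = conn.cursor()
    try:
        # Parameters are bound by the driver, never interpolated by hand.
        cur.execute(VALIDATION_SQL, (customer_id, site_id))
        return cur.fetchone() is not None
    finally:
        cur.close()
```

When the function returns False, the handler can stop early and return an error before any presigned URL is created.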

Step 5: Generate S3 Presigned Upload URL

Instead of sending files through the backend, Lambda generates a presigned upload URL.

This allows the frontend to upload directly to Amazon S3.

Example code:

    url = s3.generate_presigned_url(
        "put_object",
        Params={
            "Bucket": "customer-upload-bucket",
            "Key": s3_key
        },
        ExpiresIn=3600
    )

The URL is valid for 1 hour (ExpiresIn is given in seconds).
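For testing, it can help to pass the S3 client in rather than rely on a module-level one. The sketch below assumes `s3_client` is a `boto3.client("s3")` instance; `create_upload_url` is a hypothetical wrapper, and the one-hour default simply mirrors the value used above.

```python
def create_upload_url(s3_client, bucket: str, s3_key: str,
                      expires_in: int = 3600) -> str:
    """Return a presigned URL that allows a single PUT to bucket/s3_key."""
    if expires_in <= 0:
        raise ValueError("expires_in must be a positive number of seconds")
    return s3_client.generate_presigned_url(
        ClientMethod="put_object",
        Params={"Bucket": bucket, "Key": s3_key},
        ExpiresIn=expires_in,  # lifetime in seconds
        HttpMethod="PUT",
    )
```

A shorter lifetime, for example `expires_in=300`, narrows the window in which a leaked URL could be used, at the cost of failing slow uploads that start late.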

Step 6: API Response to Frontend

Lambda returns:

    {
      "upload_url": "https://s3-presigned-url",
      "folder_key": "7e4c8b6a9adfbc13",
      "s3_key": "7e4c8b6a9adfbc13/invoice.csv"
    }

Now the frontend knows exactly where to upload the file.
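With a Lambda proxy integration, the function has to wrap that payload in a response object containing a status code and a string body. A minimal sketch, with `build_response` as a hypothetical helper name:

```python
import json


def build_response(status_code: int, payload: dict) -> dict:
    """Wrap a payload in the shape a Lambda proxy integration expects."""
    return {
        "statusCode": status_code,
        "headers": {"Content-Type": "application/json"},
        # The body must be a string, so the payload is serialized here.
        "body": json.dumps(payload),
    }


if __name__ == "__main__":
    ok = build_response(200, {
        "upload_url": "https://s3-presigned-url",
        "folder_key": "7e4c8b6a9adfbc13",
        "s3_key": "7e4c8b6a9adfbc13/invoice.csv",
    })
    print(ok["statusCode"], ok["body"])
```

The same helper covers the failure path from Step 4, for example `build_response(404, {"error": "unknown customer or site"})`; the status code and message there are illustrative choices.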

Step 7: Frontend Uploads File to S3

The frontend performs an HTTP PUT request:

PUT https://s3-presigned-url

File content is sent directly to S3.

Resulting object:

    s3://customer-upload-bucket/
        7e4c8b6a9adfbc13/
            invoice.csv

With this flow, file bytes never pass through the backend; Lambda only issues the URL.

Why Use Hash-Based Folder Keys?

Hash-based folders provide several advantages:

1. Clean Storage Structure

    bucket/
        7e4c8b6a9a/
        2ab881ef12/
        98cc112ab1/

2. Obfuscation

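Customer and site IDs do not appear in plain form in S3 paths. This is obfuscation, not access control: an MD5 over small numeric IDs can be recovered by brute force, so bucket permissions still have to do the protecting.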

3. Deterministic Mapping

Same customer + site → same folder.

4. Scalable File Organization

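Each customer + site pair gets its own prefix, so listing or cleaning up one pair's files stays a prefix-scoped operation even with millions of objects in the bucket.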

Security Best Practices

When implementing this architecture:

Never Expose Database to Frontend

Frontend must not connect directly to PostgreSQL.

Always go through the API.

Use Presigned URLs

This prevents:

- credential exposure

- unauthorized uploads

Validate IDs

Always verify customer_id and site_id before generating the upload URL.

Use IAM Permissions

Limit the Lambda role's permissions to s3:PutObject on the required bucket only.
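As an illustration, a policy statement of that shape can be generated as follows. The helper name `put_only_policy` is hypothetical, and the bucket name is the one used in the presigned URL example. The role would still need whatever logging and network permissions the function itself requires.

```python
import json


def put_only_policy(bucket: str) -> dict:
    """Return an IAM policy document allowing only s3:PutObject on one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "s3:PutObject",
                # Object-level actions are granted on the objects ("/*"),
                # not on the bucket ARN itself.
                "Resource": f"arn:aws:s3:::{bucket}/*",
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(put_only_policy("customer-upload-bucket"), indent=2))
```

Because a presigned URL carries the permissions of the identity that signed it, a role scoped this way cannot be used to hand out download or delete URLs.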

Final Architecture Overview

    User uploads file
        │
        ▼
    Frontend application
        │
        ▼
    API Gateway endpoint
        │
        ▼
    Lambda backend
        │
        │ Validate customer + site
        ▼
    PostgreSQL
        │
        │ Generate MD5 folder
        ▼
    Lambda generates presigned URL
        │
        ▼
    Frontend uploads file
        │
        ▼
    Amazon S3 bucket

This architecture is secure, scalable, and cloud-native.

Conclusion

Building a robust upload system requires more than simply storing files. By combining:

- API-driven architecture

- serverless compute

- database validation

- hash-based folder organization

- direct S3 uploads

you can build a highly scalable and secure solution.

Using Amazon API Gateway, AWS Lambda, PostgreSQL, and Amazon S3, organizations can implement a production-grade file upload pipeline with minimal infrastructure management.

This design pattern is widely used in modern cloud data platforms, SaaS applications, and enterprise systems.