Introduction

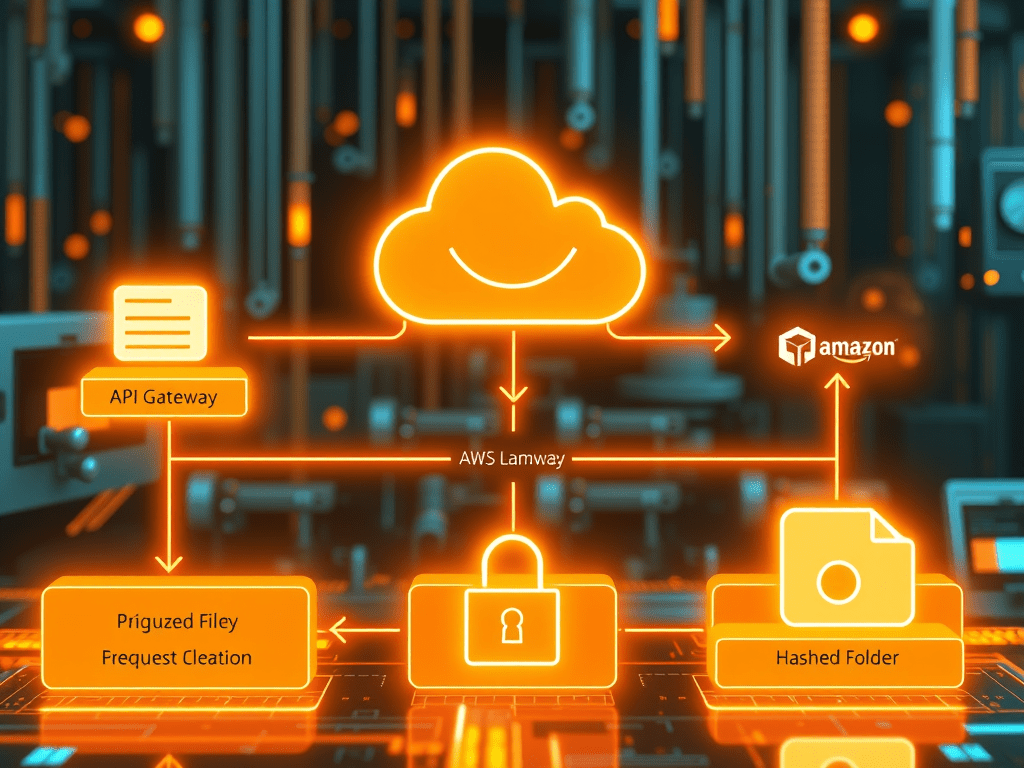

One common challenge beginners face while working with AWS Glue is handling S3 paths that contain dynamically changing folder names.

This usually happens when files are written by applications, APIs, Lambda functions, or ETL jobs that generate hash-based or UUID-based folders for every run.

For example, your S3 path may look like this:

s3://my-bucket/output/

8d3f1a92/result/

c2b7e5f1/result/

9af1b2c8/result/

Here, the middle folder (8d3f1a92, c2b7e5f1, and so on) changes on every execution.

Because of this, a Glue crawler may:

- create multiple unwanted partitions

- fail to create a clean table

- treat the hash folder as part of the schema

In this blog, we’ll understand why this issue happens and how to fix it properly.

Understanding the Problem

Let’s say your actual folder structure is:

s3://project-bucket/results/

123abc456/result/data.parquet

789xyz111/result/data.parquet

The folder names 123abc456 and 789xyz111 are dynamic values.

These may be:

- request IDs

- hash values

- UUIDs

- timestamp-based folders

The issue is that the crawler expects stable folder structures.

When you crawl this path:

s3://project-bucket/results/

Glue scans every level and may treat the dynamic folder as a partition column.

That makes querying difficult.
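To make the partition problem concrete, here is a minimal sketch in plain Python. It groups a hypothetical list of object keys by the first folder under the prefix, which is roughly the level a crawler would see as a partition value. The function name and the key list are illustrative assumptions, not part of any AWS API.

```python
from collections import defaultdict


def group_by_first_folder(keys, prefix):
    """Group object keys by the first folder name found under `prefix`."""
    groups = defaultdict(list)
    for key in keys:
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        first_folder, _, remainder = rest.partition("/")
        if remainder:  # skip objects sitting directly under the prefix
            groups[first_folder].append(remainder)
    return dict(groups)


# Hypothetical keys, mirroring the example structure above
keys = [
    "results/123abc456/result/data.parquet",
    "results/789xyz111/result/data.parquet",
]
groups = group_by_first_folder(keys, "results/")
print(sorted(groups))
```

Every run adds one more distinct value at that level, so the number of "partitions" grows with the number of executions.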

Why This Happens in AWS Glue

AWS Glue crawler works best with predictable folder hierarchies.

For example:

s3://bucket/sales/year=2026/month=04/

This is perfect because:

- folder names are meaningful

- partitions are stable

But dynamic folders like:

s3://bucket/output/9a8b7c/result/

have no business meaning.

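So the crawler cannot map them to a meaningful partition key. It typically falls back to a generic column name such as partition_0, or splits the data into separate tables.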
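The difference between the two layouts can be sketched in a few lines of Python. The helper below is a hypothetical illustration, not crawler logic: it only labels each folder as Hive-style (key=value) or opaque.

```python
def classify_segments(path):
    """Label each folder in an S3 path as Hive-style (key=value) or opaque."""
    # Drop the scheme and the bucket name, keep only the folder names.
    without_scheme = path.split("://", 1)[-1]
    folders = [p for p in without_scheme.split("/")[1:] if p]
    labels = []
    for folder in folders:
        key, sep, value = folder.partition("=")
        if sep and key and value:
            labels.append((folder, "hive-style"))
        else:
            labels.append((folder, "opaque"))
    return labels


stable = classify_segments("s3://bucket/sales/year=2026/month=04/")
dynamic = classify_segments("s3://bucket/output/9a8b7c/result/")
print(stable)
print(dynamic)
```

In the first path the folder names carry their own column names. In the second, nothing tells a reader (or a tool) what 9a8b7c represents.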

Best Solution: Use Wildcard Path Pattern

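This is the easiest and most effective solution.

Instead of building the table around every folder under the top-level path, treat the dynamic level as a wildcard.

The stable pattern is:

s3://project-bucket/results/*/result/

The * means:

“match any folder name at this level”

Spark (and therefore a Glue job) accepts this pattern directly when reading, so changing folder names are handled automatically. Note that the crawler include path and the Athena LOCATION clause do not accept wildcards. For Athena, point a manually defined, non-partitioned table at the parent prefix instead; Athena reads every file beneath it regardless of the folder names.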
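The single-level behavior of * can be illustrated with a small regex translation in plain Python. This is only a sketch of the idea; it is not how Spark or any AWS service implements path matching, and the sample paths are made up.

```python
import re


def glob_to_regex(pattern):
    """Translate a path pattern where * matches exactly one folder level."""
    parts = [re.escape(p) for p in pattern.split("*")]
    return re.compile("^" + "[^/]+".join(parts))


matcher = glob_to_regex("s3://project-bucket/results/*/result/")

# Hypothetical object paths
paths = [
    "s3://project-bucket/results/abc123/result/data.parquet",
    "s3://project-bucket/results/def456/result/data.parquet",
    "s3://project-bucket/results/abc123/logs/run.log",
    "s3://project-bucket/results/a/b/result/data.parquet",
]
matched = [p for p in paths if matcher.match(p)]
print(matched)
```

Only the first two paths match: the log file sits under a different folder, and the last path has two levels where the pattern allows one.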

Step-by-Step Fix

Step 1: Identify the Stable Folder Pattern

Example actual paths:

s3://project-bucket/results/abc123/result/

s3://project-bucket/results/def456/result/

s3://project-bucket/results/ghi789/result/

Common stable pattern:

results/*/result/
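If there are many example paths, the stable pattern can be derived mechanically. The helper below is a hypothetical sketch that assumes all paths have the same depth; it replaces every level whose value differs between paths with *.

```python
def derive_pattern(paths):
    """Replace every folder level that varies across `paths` with '*'."""
    if not paths:
        raise ValueError("at least one path is required")
    split_paths = [p.rstrip("/").split("/") for p in paths]
    depth = len(split_paths[0])
    if any(len(parts) != depth for parts in split_paths):
        raise ValueError("paths have different depths")
    pattern = []
    for level in zip(*split_paths):
        # A level is stable when every path has the same value there.
        pattern.append(level[0] if len(set(level)) == 1 else "*")
    return "/".join(pattern) + "/"


pattern = derive_pattern([
    "s3://project-bucket/results/abc123/result/",
    "s3://project-bucket/results/def456/result/",
    "s3://project-bucket/results/ghi789/result/",
])
print(pattern)
```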

Step 2: Create Table Using Athena

Open Amazon Athena and run:

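CREATE EXTERNAL TABLE result_table (
  customer_id string,
  amount double,
  created_date string
)
STORED AS PARQUET
LOCATION 's3://project-bucket/results/';

Athena does not accept wildcards in LOCATION, so the table points at the parent prefix. Because the table is not partitioned, Athena reads every file under that prefix, whatever the dynamic folder is called. This assumes results/ contains only the result files.

This creates one clean table.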

Step 3: Validate the Data

Run:

SELECT * FROM result_table LIMIT 10;

Now Athena reads data from all dynamic folders.

Alternative Fix: Create a Curated Folder

For production systems, this is the best practice.

Instead of querying dynamic folders directly, move data into a clean folder.

Example:

raw/

abc123/result/

xyz456/result/

curated/

result/

How to Do This Using Glue Job

Use a Glue PySpark job:

from pyspark.sql import SparkSession

spark = SparkSession.builder.getOrCreate()

df = spark.read.parquet("s3://project-bucket/results/*/result/")  # read every run folder

df.write.mode("overwrite").parquet("s3://project-bucket/curated/result/")  # replace the curated copy

Now your crawler can safely crawl:

s3://project-bucket/curated/result/

This gives a stable table.
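One side effect of consolidating is that the run folder name no longer appears in the path. If that ID matters for debugging, it can be captured before writing. The sketch below shows the extraction in plain Python; the pattern and function name are assumptions for illustration. In a PySpark job, the same regex could likely be applied with regexp_extract over input_file_name() to add a column, though that is not shown here.

```python
import re

# Assumed layout: results/<run id>/result/<file>
RUN_ID_PATTERN = r"results/([^/]+)/result/"


def extract_run_id(file_path):
    """Return the dynamic folder name from a file path, or None if absent."""
    match = re.search(RUN_ID_PATTERN, file_path)
    return match.group(1) if match else None


run_id = extract_run_id("s3://project-bucket/results/abc123/result/data.parquet")
missing = extract_run_id("s3://project-bucket/curated/result/data.parquet")
print(run_id, missing)
```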

Why This Is Better

Benefits of curated path:

- no dynamic folder confusion

- easy crawler setup

- faster, more predictable queries

- easier debugging

This is the standard approach used in real projects.

Common Beginner Mistake

Many beginners point the crawler directly at the parent path:

s3://project-bucket/results/

This causes:

- messy partitions

- incorrect schema

- multiple tables

Avoid crawling unstable paths.

Best Practice Recommendation

Use this approach:

Raw Layer

results/hash/result/

Processing Layer

Glue Job

Curated Layer

curated/result/

This is a professional data engineering design.

Final Conclusion

If your S3 path contains dynamically changing variables such as hashes, UUIDs, or run IDs, do not directly crawl the parent folder.

Instead, use one of these approaches:

Quick Fix

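Use the wildcard pattern when reading the data (in Athena, define the table on the parent prefix, since LOCATION does not accept wildcards):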

*/result/

Production Fix

Move data to curated folder and crawl that path.

This keeps your Glue tables clean and query-ready.
