Here I share two different methods to execute (run) only one step in a job. For example, suppose you have a JCL with 10 steps and you want to execute only one of them. Either of the two ideas below will do it.

Say I want to execute only step 5 (STEP05). How can I do it?

- One way is to code RESTART=STEP05 on the job card. The problem is that the job then goes on to execute the subsequent steps as well. To prevent that, insert a null statement (a line containing only `//`) after STEP05; it marks the end of the job, so nothing after it runs.

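- A neater way leaves the job steps untouched and changes only the job card: code both the RESTART and COND parameters there, e.g., `RESTART=STEP05,COND=(0,LE)`. RESTART makes the job start at STEP05. The job-level condition `COND=(0,LE)` asks whether 0 is less than or equal to a step's return code, which is true for any return code, so the job ends as soon as STEP05 has run and only the step named in RESTART gets executed.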
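The second approach can be sketched as follows. This is a minimal illustration, not a production job: the job name `MYJOB`, the accounting field, the `CLASS`/`MSGCLASS` values, and the use of `IEFBR14` as a do-nothing program in every step are assumptions made for the example; only `RESTART=STEP05,COND=(0,LE)` comes from the text above.

```jcl
//MYJOB    JOB (ACCT),'RUN STEP05 ONLY',CLASS=A,MSGCLASS=X,
//             RESTART=STEP05,COND=(0,LE)
//*
//* RESTART=STEP05 : STEP01-STEP04 ARE SKIPPED, JOB STARTS AT STEP05
//* COND=(0,LE)    : TRUE FOR ANY RETURN CODE, SO THE JOB ENDS
//*                  AFTER THE FIRST STEP THAT RUNS (STEP05)
//*
//STEP04   EXEC PGM=IEFBR14
//STEP05   EXEC PGM=IEFBR14
//STEP06   EXEC PGM=IEFBR14
```

For the first approach, you would keep only `RESTART=STEP05` on the job card and add a line containing nothing but `//` after the last statement of STEP05, which means editing the body of the job. The job-card approach avoids that, and removing the two parameters restores normal execution.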

Recent Posts

Unlocking the Power of Databricks Genie: A Comprehensive Guide

Databricks Genie is a collaborative data engineering tool built on the Databricks Unified Analytics Platform, enhancing data analytics for businesses. Key features include collaborative workspaces, efficient data processing with Apache Spark, built-in machine learning capabilities, robust data visualization, seamless integration, and strong security measures, fostering informed decision-making.

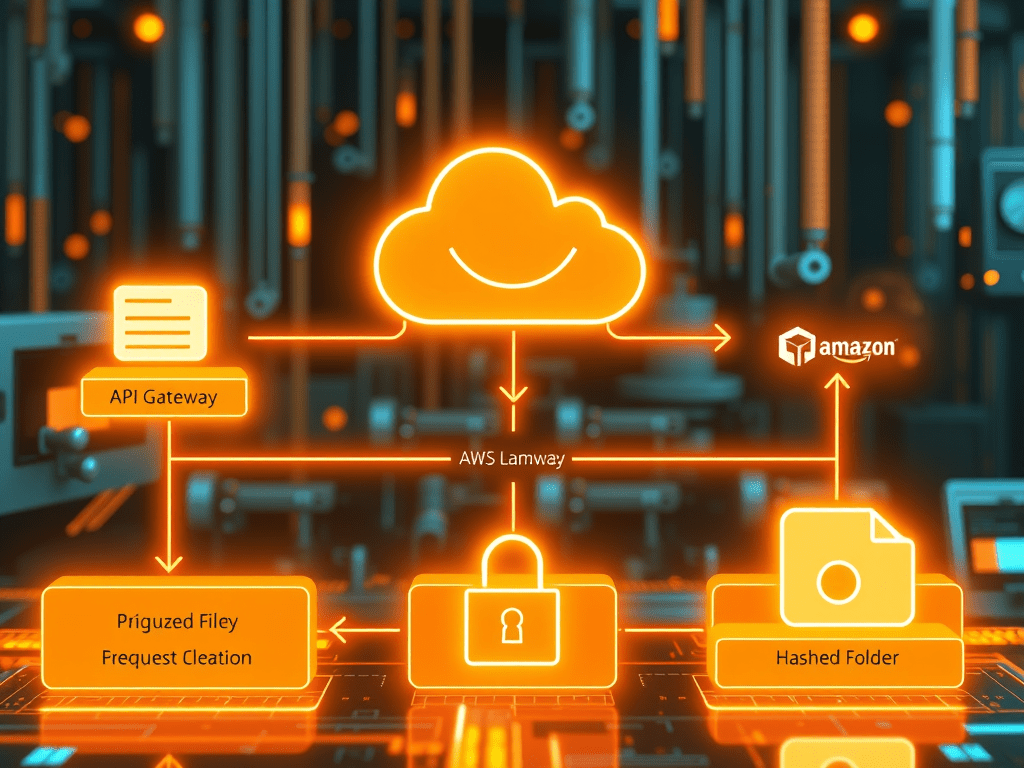

Secure S3 File Upload Using API Gateway, Lambda & PostgreSQL (Complete AWS Architecture Guide

Modern applications often allow users to upload files—documents, invoices, images, or datasets. But a production-grade upload pipeline must be secure, scalable, and well-organized. In this article, we will build a complete end-to-end architecture where: We will implement this using Amazon API Gateway, AWS Lambda, PostgreSQL, and Amazon S3. This architecture is widely used in cloud-native…

AI Agents in Data Engineering: Everything You Need to Know

AI agents are revolutionizing data engineering by automating tasks such as monitoring pipelines, generating SQL queries, and ensuring data quality. They enhance productivity, speed up troubleshooting, and improve data accessibility for users. While offering significant advantages, AI agents also face challenges in security, accuracy, and integration with existing systems.

You must be logged in to post a comment.