Use pip or apt-get to install BeautifulSoup for Python. If the installation produces errors, the commands below show how to fix them.

On Linux, install the BeautifulSoup parser for Python with either of the commands below.

Method 1

$ apt-get install python3-bs4 (for Python 3)

Method 2

$ pip install beautifulsoup4

Note: If you don’t have easy_install or pip installed, download the Beautiful Soup source tarball and install it with setup.py:

$ python setup.py install
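To confirm that one of the install methods above worked, a small check like the following can help. This is a minimal sketch that uses only the standard library (`importlib.metadata`, Python 3.8+). The helper name `installed_version` is hypothetical and chosen only for illustration.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package):
    """Return the installed version of a distribution, or None if it is missing.

    `installed_version` is an illustrative helper, not part of Beautiful Soup.
    """
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# pip installs Beautiful Soup under the distribution name "beautifulsoup4".
found = installed_version("beautifulsoup4")
print("beautifulsoup4:", found if found else "not installed")
```

If it prints "not installed", pip or apt-get probably installed the package for a different Python interpreter than the one running the script.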

How to Fix Syntax Error After Installation

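A syntax error right after installation usually means that the Python 2 version of the code is running under Python 3. To fix it, install the package with Python 3 using setup.py:

$ python3 setup.py install

or convert the Python 2 code to Python 3 by running 2to3 on the bs4 directory:

$ 2to3-3.2 -w bs4

How to install lxml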

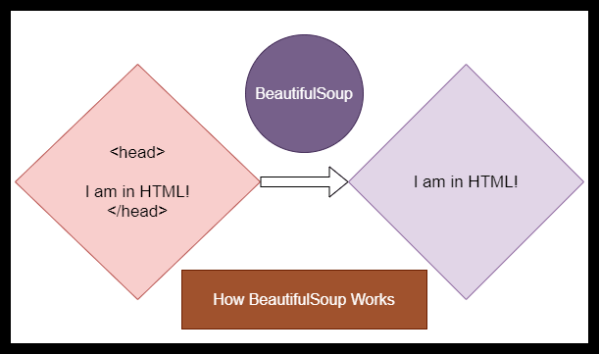

BeautifulSoup works out of the box with html.parser, the HTML parser included in Python's standard library. You can also install additional parsers, such as lxml.

$ apt-get install python3-lxml (for Python 3)

or

$ easy_install lxml

or

$ pip install lxml

How to Install html5lib

$ apt-get install python3-html5lib (for Python 3)

or

$ easy_install html5lib

or

$ pip install html5lib
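After installing the optional parsers, it can be useful to check which ones the current interpreter can actually find. The sketch below uses only the standard library (`importlib.util.find_spec`). The names `PARSER_MODULES` and `available_parsers` are hypothetical, and the mapping assumes that each parser name matches the module that provides it.

```python
from importlib.util import find_spec

# Parser name passed to BeautifulSoup -> module that has to be importable.
# This mapping is an assumption made for illustration.
PARSER_MODULES = {
    "html.parser": "html.parser",  # ships with Python
    "lxml": "lxml",                # installed separately
    "html5lib": "html5lib",        # installed separately
}


def available_parsers():
    """Return a dict saying, per parser name, whether its module can be found."""
    return {
        name: find_spec(module) is not None
        for name, module in PARSER_MODULES.items()
    }


for name, found in available_parsers().items():
    print(name, "available" if found else "missing")
```

html.parser should always be reported as available. A "missing" result for lxml or html5lib means the install commands above need to be run for this interpreter.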

How do I Remove HTML Tags in Web data

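BeautifulSoup takes two arguments in the code below. The first, fp, is the open file (or a string of markup) to parse. The second, html.parser, names the parser to use. If the extra parsers are installed, you can pass lxml or html5lib instead, or xml (provided by lxml) for XML documents.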

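Python Code

from bs4 import BeautifulSoup

# Parse HTML from an open file (assumes index.html exists in the current directory).
with open("index.html") as fp:
    soup = BeautifulSoup(fp, 'html.parser')

# Parse HTML from a string.
soup = BeautifulSoup("<html>a web page</html>", 'html.parser')

# Parse a longer document and print only the text inside each paragraph.
html = """
<html>
<head>
</head>
<body>
<p>
Here's a paragraph of text!
</p>
<p>
Here's a second paragraph of text!
</p>
</body>
</html>"""
soup = BeautifulSoup(html, "html.parser")
for p in soup.find_all("p"):
    print(p.get_text(strip=True))

The Output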

Here's a paragraph of text!

Here's a second paragraph of text!
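For comparison, the same tag-stripping idea can be sketched without Beautiful Soup, using `html.parser.HTMLParser` from the standard library, which is the module behind the html.parser option above. This is a swapped-in technique, not how Beautiful Soup works internally. The names `TextExtractor` and `strip_tags` are hypothetical.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects the text between tags and ignores the tags themselves."""

    def __init__(self):
        super().__init__()
        self.chunks = []

    def handle_data(self, data):
        # Called for each run of text; skip runs that are only whitespace.
        text = data.strip()
        if text:
            self.chunks.append(text)


def strip_tags(html):
    """Return the text content of an HTML string, one text run per line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


page = (
    "<html><body>"
    "<p>Here's a paragraph of text!</p>"
    "<p>Here's a second paragraph of text!</p>"
    "</body></html>"
)
print(strip_tags(page))
```

This sketch also keeps the contents of script and style elements, so Beautiful Soup remains the more convenient choice for real pages.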
