Here are nine advanced Kafka interview questions for experienced professionals.

Kafka interview questions for experienced professionals

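1. What is the name of the JSON parser in Kafka?

Confluent's Schema Registry library provides KafkaJsonSchemaSerializer and KafkaJsonSchemaDeserializer. The serializer converts objects into JSON messages that are validated against a JSON Schema. The deserializer converts those messages back into objects.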
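As a rough illustration of what a JSON serializer and deserializer pair does, here is a plain-Python sketch. It is not the Confluent classes and does no schema validation; the function names `serialize_json` and `deserialize_json` are hypothetical.

```python
import json


def serialize_json(record: dict) -> bytes:
    """Turn a dict into UTF-8 encoded JSON bytes, the form a producer would send."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def deserialize_json(payload: bytes) -> dict:
    """Turn JSON bytes read by a consumer back into a dict."""
    return json.loads(payload.decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical record used only for this illustration.
    order = {"id": 42, "item": "book"}
    payload = serialize_json(order)
    print(payload)
    print(deserialize_json(payload) == order)
```

The round trip returns the original record, which is the property any serializer and deserializer pair on a topic has to preserve.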

2. What is QueueFullException?

It occurs when the producer sends messages faster than the broker can handle them, so the producer's queue fills up. The resolution is to add more brokers to share the load.

To run more than one broker on a single node, you need more than one server.properties file. Each file defines its own broker.id.

Here's an example:

broker.id=1

port=9093

log.dir=/tmp/kafka-logs-1

To start the Kafka server with this file:

[srini@localhost kafka_2.9.2-0.8.1.1]# bin/kafka-server-start.sh config/server-1.properties
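To see why a full queue produces an error like QueueFullException, here is a small analogy in plain Python. It uses the standard library `queue.Queue` as a stand-in for the producer's bounded buffer and is not Kafka code. The helper `send_all` and the buffer size of 3 are assumptions made for the illustration.

```python
import queue


def send_all(buffer: queue.Queue, messages: list) -> list:
    """Try to enqueue every message without blocking; return the rejected ones."""
    rejected = []
    for message in messages:
        try:
            buffer.put_nowait(message)
        except queue.Full:
            # Nothing drained the buffer in time, so this message is refused.
            rejected.append(message)
    return rejected


if __name__ == "__main__":
    # Hypothetical buffer that holds at most 3 pending messages.
    buffer = queue.Queue(maxsize=3)
    rejected = send_all(buffer, ["m1", "m2", "m3", "m4", "m5"])
    print("buffered:", buffer.qsize())
    print("rejected:", rejected)
```

In this sketch nothing consumes from the buffer, so every message after the third is rejected. Adding brokers helps in the same way that draining the buffer faster would.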

3. Can we have multiple consumers in a Consumer group?

Yes, you can. The rule of thumb is that each partition is read by only one consumer in the group, while one consumer can read several partitions. If the group has more consumers than the topic has partitions, the excess consumers sit idle. So keep the number of consumers in a group at or below the number of partitions.
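The rule above can be sketched with a simplified round-robin assignment in Python. Kafka's real assignors are more involved; the function `assign_partitions` and the consumer names are hypothetical and exist only to show how excess consumers end up idle.

```python
def assign_partitions(partitions: list, consumers: list) -> dict:
    """Spread partitions over consumers round-robin; each partition gets one owner."""
    if not consumers:
        return {}
    assignment = {consumer: [] for consumer in consumers}
    for index, partition in enumerate(partitions):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


if __name__ == "__main__":
    # Hypothetical topic with 3 partitions and a group of 4 consumers.
    result = assign_partitions([0, 1, 2], ["c1", "c2", "c3", "c4"])
    for consumer, owned in result.items():
        print(consumer, owned if owned else "idle")
```

With three partitions and four consumers, the fourth consumer receives no partition. With four partitions and two consumers, each consumer reads two partitions.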

4. Can we decrease the number of partitions once they are added?

No, you cannot decrease the number of partitions of a topic. However, you can increase it.

5. What is the way to find the number of topics in a broker?

You can use the kafka-topics.sh tool with the --list option to list the topics. You can use the --describe option to see the details of each topic, such as its partition count, replication factor, and partition leaders.

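6. How to add additional partitions?

For an existing topic, use the kafka-topics.sh tool with the --alter option and a larger --partitions value. The num.partitions setting in server.properties only sets the default number of partitions for new topics created without an explicit count. Its default is 1.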

7. What are the different APIs in Kafka?

Kafka offers the following APIs and related tools:

- Producer API

- Consumer API

- Connect Source API

- Connect Sink API

- Streams API

- Admin API

- KSQL (a SQL layer from Confluent built on Kafka Streams)

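8. How to write data from Kafka to a database?

Kafka Connect has two kinds of connectors: source connectors and sink connectors. Source connectors import data from external systems, such as source databases, into Kafka topics. Sink connectors export data from Kafka topics to external databases, so a sink connector is what you use to write data from Kafka to a database. The figure below shows a typical use of the Connect APIs.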

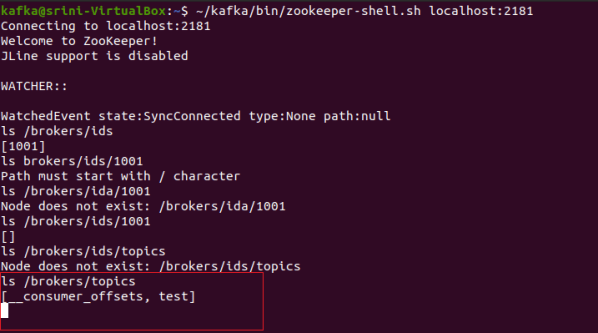

9. How to verify where ZooKeeper stores information about broker IDs and offsets?

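ZooKeeper keeps broker IDs under its /brokers/ids path. Older Kafka versions also kept consumer offsets in ZooKeeper, while newer versions keep them in the internal __consumer_offsets topic on the brokers. Here is the command to check both the offset metadata and the offsets in that topic:

$ kafka-console-consumer.sh --consumer.config /tmp/consumer.config \
--formatter "kafka.coordinator.GroupMetadataManager\$OffsetsMessageFormatter" \
--zookeeper : --topic __consumer_offsets

The above command gives the metadata of the console consumer.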
