There are two types of PL/SQL blocks: named and unnamed (anonymous). Named procedures are stored in the database, so you can use them for stand-alone, reusable tasks. Unnamed blocks are useful for ad-hoc work and in shell scripts.

Anonymous Block (Unnamed)

A block that we don’t give a name is called an anonymous block. Because it has no name, it is not stored in the database; it is compiled and run each time you submit it.


[DECLARE
   … optional declaration statements …]
BEGIN
   … executable statements …
[EXCEPTION
   … optional exception handler statements …]
END;
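To make the three sections of the template concrete, here is a minimal sketch of an anonymous block that uses all of them. The variable names (v_total, v_count, v_avg) are hypothetical and chosen only for illustration; ZERO_DIVIDE is one of Oracle's predefined exceptions.

```sql
SET SERVEROUTPUT ON;

DECLARE
   -- Hypothetical variables, used only for this illustration
   v_total  NUMBER := 10;
   v_count  NUMBER := 0;
   v_avg    NUMBER;
BEGIN
   -- Dividing by zero raises the predefined ZERO_DIVIDE exception
   v_avg := v_total / v_count;
   DBMS_OUTPUT.PUT_LINE('Average: ' || v_avg);
EXCEPTION
   WHEN ZERO_DIVIDE THEN
      -- Control jumps here, so the PUT_LINE above is skipped
      DBMS_OUTPUT.PUT_LINE('Cannot divide by zero');
END;
/
```

Only the BEGIN … END part is mandatory; the DECLARE and EXCEPTION sections can be left out when a block needs no variables or error handling.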

Sample anonymous block

Here’s an example of an anonymous PL/SQL block.

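SET SERVEROUTPUT ON;

DECLARE
   -- Variable declared and initialized in the declaration section
   V_MYNUMBER NUMBER(2) := 1;
BEGIN
   -- Use || to concatenate the label with the variable's value
   DBMS_OUTPUT.PUT_LINE('MY INPUT IS : ' || V_MYNUMBER);
END;
/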

Named PL/SQL block

Here is an example of a named PL/SQL block. The procedure is named pl.


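-- Create (or replace) a stored procedure named pl with one input parameter
CREATE OR REPLACE PROCEDURE pl (aiv_text IN VARCHAR2)
IS
BEGIN
   DBMS_OUTPUT.PUT_LINE(aiv_text);
END;
/

-- Call the procedure
EXECUTE pl('my input srini');

-- Remove the procedure when it is no longer needed
DROP PROCEDURE pl;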

Key takeaways

- A named PL/SQL block differs from an anonymous block in that it starts with ‘CREATE OR REPLACE PROCEDURE’ and uses IS in place of DECLARE.

- Local variables are declared after IS (that is, after the procedure name and parameter list) and before BEGIN.

- Use the EXECUTE command to call the procedure, and the DROP PROCEDURE command to remove it.
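As a rough illustration of these points, the sketch below declares a local variable between IS and BEGIN. The procedure name greet, the parameter p_name, and the variable v_message are hypothetical names chosen for this example, not part of the original code.

```sql
SET SERVEROUTPUT ON;

CREATE OR REPLACE PROCEDURE greet (p_name IN VARCHAR2)
IS
   -- Local variable: declared after IS, with no DECLARE keyword
   v_message VARCHAR2(200);
BEGIN
   v_message := 'Hello, ' || p_name;
   DBMS_OUTPUT.PUT_LINE(v_message);
END greet;
/

-- EXECUTE is a SQL*Plus shorthand for wrapping the call in BEGIN ... END;
EXECUTE greet('srini');

-- The same call written as an anonymous block
BEGIN
   greet('srini');
END;
/

DROP PROCEDURE greet;
```

The anonymous-block form of the call is the one to use from tools or scripts that do not understand the EXECUTE command.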


Recent posts

-

How to Create a Generic Stored Procedure for KPI Calculation (SQL + AWS Lambda)

In modern data engineering, building scalable and reusable systems is essential. Writing separate SQL queries for every KPI quickly becomes messy and hard to maintain. A better approach?👉 Use a Generic Stored Procedure powered by Dynamic SQL, and trigger it using AWS Lambda. In this blog, you’ll learn: What is a Generic Stored Procedure? A…

-

Unlocking the Power of Databricks Genie: A Comprehensive Guide

Databricks Genie is a collaborative data engineering tool built on the Databricks Unified Analytics Platform, enhancing data analytics for businesses. Key features include collaborative workspaces, efficient data processing with Apache Spark, built-in machine learning capabilities, robust data visualization, seamless integration, and strong security measures, fostering informed decision-making.

-

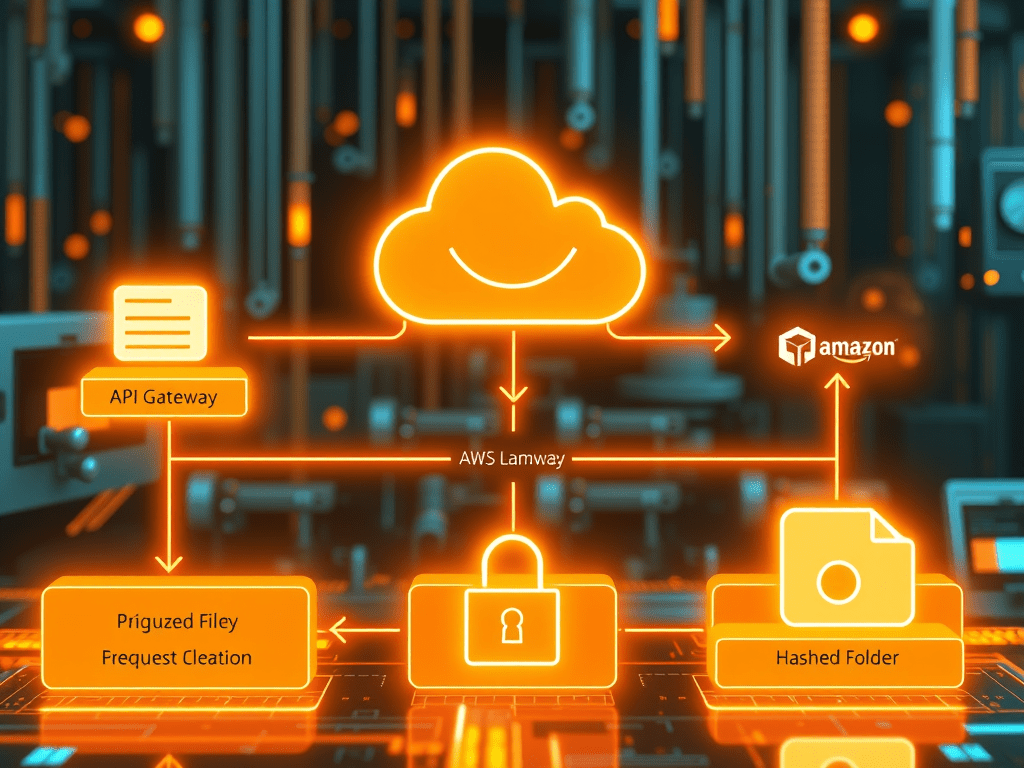

Secure S3 File Upload Using API Gateway, Lambda & PostgreSQL (Complete AWS Architecture Guide

Modern applications often allow users to upload files—documents, invoices, images, or datasets. But a production-grade upload pipeline must be secure, scalable, and well-organized. In this article, we will build a complete end-to-end architecture where: We will implement this using Amazon API Gateway, AWS Lambda, PostgreSQL, and Amazon S3. This architecture is widely used in cloud-native…